Note: This post is a cross-post of an article written for the Upstream blog, shared here so that DMP Tool followers are aware of these important changes. Please refer to that site as the version of record; DOI: 10.54900/fbq63-61s08

As stated in a prior post, we will be adding the updated NIH and NSF forms to the DMP Tool and expect to have both available by the end of the month.

Over the past decade, there has been an international effort across the research community to make data management and sharing plans (DMSPs, also called DMPs) more than static, narrative documents. Through work on machine-actionable DMPs (maDMPs), shared metadata standards, and integration with research infrastructure, the goal for a growing number of groups around the world has been to make DMPs more structured, more connected, and more meaningful across the research lifecycle.

This work has led to real progress. DMPs are increasingly seen not just as compliance requirements, but as part of a broader ecosystem that connects researchers, institutions, repositories, and funders. The idea that DMPs should be interoperable, reusable, and able to support downstream workflows is now more widely accepted than ever.

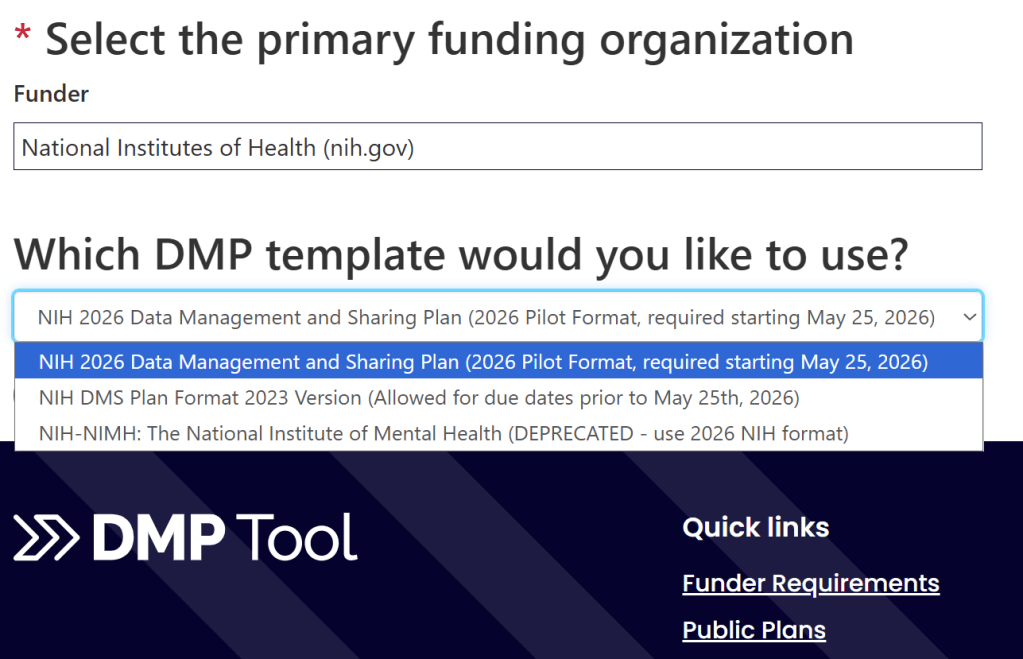

At the same time, recent developments from the National Science Foundation (NSF) and the National Institutes of Health (NIH) suggest a shift in how this vision is being implemented. Both agencies are moving away from free-form narrative plans toward more structured formats. NSF has announced that, starting April 27, 2026, its DMPs will be completed directly within Research.gov as a webform, while NIH is introducing a revised DMSP template, beginning May 25, 2026, that emphasizes structured responses and simplified inputs.

We have recently outlined these changes in a post on our DMP Tool blog, and in many ways, these changes reflect the direction the community has been advocating for. But they also raise an important question: as DMPs become more streamlined and embedded in funder systems, how do we ensure they remain interoperable, collaborative, and connected to the broader research data ecosystem?

Improvements in the DMP landscape

Many of the recent changes from funders reflect directions that the community has been actively working toward for years. Efforts around maDMPs, shared metadata standards, and stronger connections between planning and outputs have all been grounded in a common goal: to make DMPs more structured, more usable, and more integrated into the research lifecycle. In that context, the move away from free-form narrative plans toward more structured formats is both expected and welcome.

Several aspects of the evolving landscape stand out as particularly positive:

- Moving toward structured questions helps reduce ambiguity and brings greater consistency to how plans are created and reviewed.

- A clearer expectation that data should be shared, with exceptions requiring justification, reinforces a shift from recommendation to norm.

- Embedding DMP creation into proposal systems meets researchers where they are and has the potential to reduce administrative burden at the point of application.

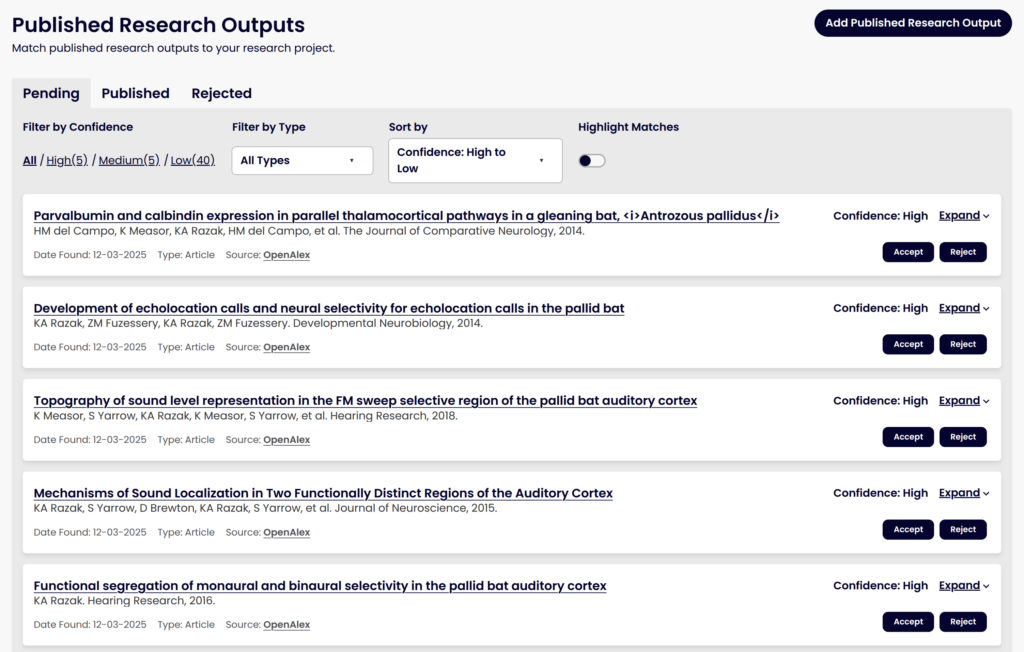

There is also a broader opportunity here. More structured plans make it easier to connect DMPs to downstream activities, including tracking data sharing over the course of a project and linking plans to outputs such as datasets, repositories, and related identifiers. These are areas where the community has invested significant effort, through initiatives such as maDMPs, DMP IDs, and tools designed to support more dynamic and reusable integrations.

Taken together, these changes signal real progress. They suggest that funders are not only encouraging data sharing, but also rethinking how planning can better support it in practice.

At the same time, as these ideas move from principle to implementation, new questions begin to emerge. The benefits of structure, simplicity, and integration depend on how well they connect to the broader ecosystem and whether they continue to support meaningful, collaborative planning. These are the areas where the details of implementation will matter most.

Changes at NSF

Recently, NSF has moved toward a structured, webform-based DMP. While the full form has not yet been released, it is expected to include a set of core questions covering familiar elements of data management planning:

- What kind of data is being shared

- What concerns limit the sharing of data and why

- What is the format of the shared data

- Where will it be shared

- For how long will it be available

- What is the source of the data

- Who is responsible for managing the data

This shift toward structured input is an important development. It brings greater consistency to how plans are created and reviewed and aligns with long-standing efforts to make DMPs more machine-readable and actionable. At the same time, the decision to implement this form within Research.gov introduces a new set of questions about how these plans will connect to the broader research data ecosystem.
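To make "machine-readable" concrete, here is a minimal Python sketch of how answers to the expected core questions might be captured as a structured record. Because the NSF form has not been released, every field name below is a hypothetical placeholder chosen for illustration, not NSF's schema.

```python
import json
from dataclasses import dataclass, asdict


@dataclass
class StructuredDMP:
    """Hypothetical record; field names are illustrative, not NSF's."""
    data_types: list[str]        # what kind of data is being shared
    sharing_limitations: str     # concerns that limit sharing, and why
    formats: list[str]           # format of the shared data
    repository: str              # where it will be shared
    retention_years: int         # how long it will be available
    data_source: str             # source of the data
    responsible_party: str       # who manages the data

    def missing_fields(self) -> list[str]:
        """Return the names of fields left empty."""
        return [
            name
            for name, value in asdict(self).items()
            if value in ("", [], None)
        ]

    def to_json(self) -> str:
        """Serialize to JSON with stable key order."""
        return json.dumps(asdict(self), indent=2, sort_keys=True)


# Example values are invented for illustration.
plan = StructuredDMP(
    data_types=["survey responses"],
    sharing_limitations="",
    formats=["CSV"],
    repository="Example Repository",
    retention_years=10,
    data_source="collected by the project",
    responsible_party="Principal investigator",
)

print(plan.missing_fields())
print(plan.to_json())
```

A record like this can be checked for completeness by a program and handed to another system as JSON, which is much harder to do with a narrative PDF.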

maDMPs have been developed with the goal of enabling information to move between systems, supporting workflows that extend beyond the point of proposal submission. As NSF stated in a past Dear Colleague Letter:

> A machine-readable document allows a computer program to interpret the DMP, such as to prepare a data repository for an eventual deposit of a large or complicated dataset…. A benefit of DMP tools for researchers is that they can generate both a PDF version of the DMP that is suitable for inclusion in a grant proposal and a machine-readable version suitable for sharing with an intended recipient data repository or the researcher’s home institution.

If DMPs are created and maintained entirely within a closed system, without mechanisms such as APIs or support for interoperable formats, it becomes more difficult to realize this vision. Rather than flowing across systems, key information may remain siloed, requiring researchers or institutions to recreate plans in other environments in order to support downstream use. This not only introduces additional effort, but also increases the risk that multiple versions of a plan diverge over time.
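The divergence risk described above can be illustrated with a small Python sketch. It assumes, hypothetically, that both the funder system and an institutional tool can expose a plan as a flat dictionary; the keys and values are invented for the example.

```python
import hashlib
import json


def fingerprint(plan: dict) -> str:
    """Hash a canonical JSON form so that key order does not matter."""
    canonical = json.dumps(plan, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def changed_fields(a: dict, b: dict) -> list[str]:
    """List top-level keys whose values differ between two copies.

    Simplification: a missing key and a key set to None compare as equal.
    """
    return sorted(k for k in a.keys() | b.keys() if a.get(k) != b.get(k))


# Two copies of the same plan held in different systems (hypothetical data).
funder_copy = {"repository": "Example Repository", "retention_years": 10}
institution_copy = {"retention_years": 10, "repository": "Example Repository"}

# Same content in a different key order yields the same fingerprint.
assert fingerprint(funder_copy) == fingerprint(institution_copy)

# A later edit made in only one system causes the copies to diverge.
institution_copy["retention_years"] = 5
print(fingerprint(funder_copy) == fingerprint(institution_copy))  # False
print(changed_fields(funder_copy, institution_copy))  # ['retention_years']
```

A comparison like this is only possible when both systems can export the plan in a structured form. Without an export path, divergence tends to go unnoticed until someone compares documents by hand.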

There are also implications for the broader infrastructure that has been developing around DMPs. Persistent identifiers such as DMP IDs, along with shared metadata standards developed through efforts like the Research Data Alliance, are intended to support discovery, tracking, and integration across the research lifecycle. If DMPs created in funder systems cannot easily be registered, exported, publicized, or linked to these services, an important layer of connectivity may be lost, and some of the core principles of maDMPs may go unrealized.

Finally, the shift to a funder-hosted form changes how DMPs are created in practice. Data management planning is often a collaborative process, involving researchers, librarians, and institutional support staff. External tools and shared documents make it easier to iterate on plans, incorporate guidance, and ensure alignment with institutional policies and available resources. When plans are created directly within submission systems, that collaborative process can become more difficult, which may reduce opportunities for support and lead to plans that are harder to implement in practice.

NSF’s approach reflects important progress toward more structured and usable DMPs. At the same time, it highlights the importance of ensuring that structure is paired with interoperability, so that DMPs can function not only within funder systems, but across the broader ecosystem they are intended to support.

Changes at NIH

NIH has updated its DMSP template to reflect a different, but equally important, shift in approach. Unlike NSF’s webform, the NIH plan will still be created outside of a submission system for now, allowing researchers to use tools such as the DMP Tool and to collaborate more easily with institutional partners (though some discussions indicate NIH may consider a webform in the future). This supports many of the goals the community has been working toward, including integration with existing tools, the ability to register and reuse plans, and more flexible, collaborative workflows.

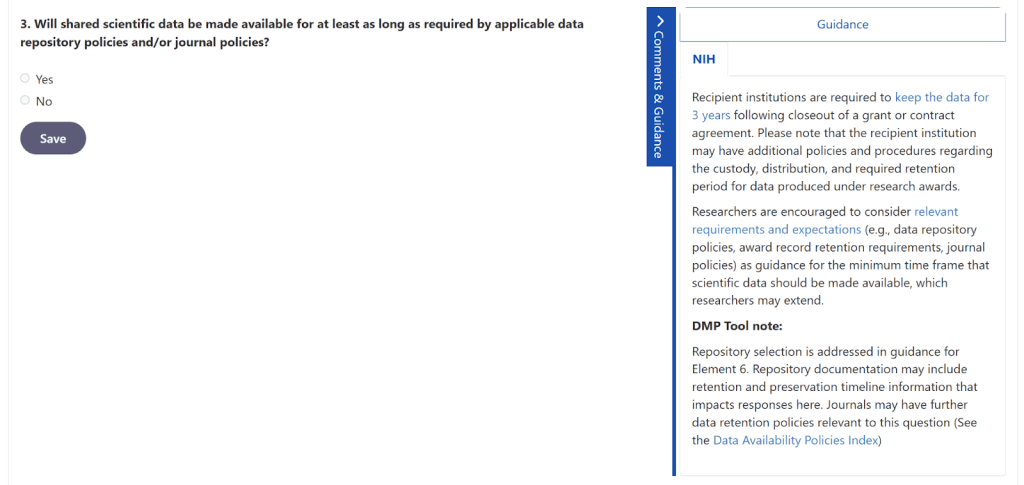

NIH’s emphasis seems to be on creating a streamlined, structured format, which is understandable. The new template focuses on a small number of core questions, primarily whether data will be shared, where it will be shared, and what outputs are expected. This reduces the burden on researchers at the proposal stage and aligns with broader efforts to simplify the DMP process and make compliance with data sharing easier to track.

At the same time, this simplification introduces a different kind of tension.

Data management plans are most effective when they prompt researchers to think prospectively about how data will be managed throughout the lifecycle of a project. As stated by NIH regarding the 2023 policy:

> Prospectively planning for how scientific data will be managed and ultimately shared is a crucial first step in optimizing the reach of data generated from NIH-funded research. Investigators and institutions are encouraged to consider these crucial elements early in research planning.

A more minimal template may make it easier to complete a plan, but it may also reduce the extent to which researchers engage with these aspects of planning. When the primary interaction becomes confirming that data will be shared, there is a risk that important details are deferred until later in the project, when options may be more limited and challenges more difficult to address. Key elements such as metadata, standards, preservation, and access may be less likely to be considered in advance, leaving researchers less well positioned to produce data that is usable by others.

There is also a subtle shift in how researchers interact with institutional support. One of the benefits of more detailed DMSPs has been the opportunity for researchers to engage with data librarians and stewards, who bring expertise in policies, repositories, and best practices. A simplified form may reduce the need for that engagement, which lowers burden, but may also reduce access to guidance that helps ensure plans are both compliant and achievable.

The challenge raised by NIH’s approach is not about interoperability, but about maintaining the role of DMPs as meaningful planning tools. The move toward simplicity is an important step in reducing friction, but it also raises the question of how to preserve the depth of planning that enables effective data sharing in practice.

What we’d like to see

Taken together, these changes from NSF and NIH reflect progress and also highlight an important inflection point. As DMPs become more structured and more embedded in funder workflows, the next question is: how do we ensure they remain connected to the broader ecosystem they are intended to support?

Focus on Interoperability

One area where this alignment becomes especially important is interoperability.

Supporting mechanisms such as APIs, along with the ability to import and export DMPs in structured, machine-readable formats, would allow each plan to connect with institutional tools, repositories, and other parts of the research lifecycle. This would preserve the benefits of webform-based submission, including structured input, integration with proposal systems, and funder-side tracking, while also enabling the kinds of workflows envisioned through machine-actionable DMPs.

In practice, this could support multiple pathways for researchers. Some may choose to complete a plan directly within a funder system, while others may develop it in a tool such as DMP Tool or a similar service and submit it through interoperable formats. Institutions could build integrations that allow DMPs to be shared across systems, reducing duplication of effort and improving consistency between planning and implementation.
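As a rough illustration of what "submitting through interoperable formats" could look like, the Python sketch below exports a plan into a small JSON envelope and imports it again with basic validation. The schema label `example-dmp/0.1`, the required fields, and the function names are all assumptions made for this example; they do not describe any funder's or tool's actual interface. A real exchange would more likely build on a community standard such as the RDA DMP Common Standard.

```python
import json

SCHEMA = "example-dmp/0.1"                     # hypothetical schema label
REQUIRED = {"title", "repository", "contact"}  # hypothetical required fields


def _check_required(plan: dict) -> None:
    """Raise ValueError if any required field is absent."""
    missing = REQUIRED - plan.keys()
    if missing:
        raise ValueError(f"missing fields: {sorted(missing)}")


def export_plan(plan: dict) -> str:
    """Wrap a plan in a versioned envelope and serialize it as JSON."""
    _check_required(plan)
    return json.dumps({"schema": SCHEMA, "plan": plan}, sort_keys=True)


def import_plan(text: str) -> dict:
    """Parse an exported plan, rejecting unknown schemas or incomplete plans."""
    envelope = json.loads(text)
    if envelope.get("schema") != SCHEMA:
        raise ValueError("unsupported schema")
    plan = envelope["plan"]
    _check_required(plan)
    return plan


# A plan drafted in an external tool (invented values) ...
drafted = {
    "title": "Example project DMP",
    "repository": "Example Repository",
    "contact": "pi@example.org",
}

# ... survives a round trip into another system unchanged.
received = import_plan(export_plan(drafted))
print(received == drafted)  # True
```

The versioned envelope is one simple design choice that lets a receiving system reject formats it does not understand instead of silently misreading them.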

More broadly, enabling access to DMPs through APIs would allow the ecosystem to build on them. Institutions could connect plans to grant management systems, track compliance with data sharing commitments, and provide targeted support to researchers working with complex data. Connections to persistent identifiers and other research infrastructure would further strengthen the ability to discover, link, and reuse data over time.

Pre- and post-award versions of DMPs

A second area for consideration is how DMPs are used across different stages of the research lifecycle.

There is a strong case for distinguishing between planning at the proposal stage and planning after funding has been awarded. A lighter-weight, structured plan at the application stage can support review and reduce burden for both applicants and reviewers. At the same time, more detailed planning is often most valuable once a project is funded, when researchers have greater clarity about their data and stronger incentives to ensure their plans are actionable.

This staged approach is already used in other contexts such as Horizon Europe, where an initial statement of intent is followed by a more comprehensive plan developed after funding. Applying a similar model here could balance efficiency with effectiveness: keeping proposal requirements streamlined while ensuring that funded projects benefit from more thorough, collaborative planning.

Such an approach would also better align with institutional support structures. Libraries and data support teams could focus their efforts where they are most impactful, working closely with funded projects to develop plans that reflect available resources, appropriate repositories, and relevant standards. Providing a defined window after funding to complete this work would allow researchers the time and context needed to engage meaningfully with the process.

Taken together, these directions point toward a model where DMPs are both simpler and more connected: easy to create at the point of application, but also interoperable, extensible, and capable of supporting the full research lifecycle.

Conclusion

The recent updates from NSF and NIH mark an important moment in the evolution of data management planning. They reflect many of the directions the community has been working toward, including greater structure, clearer expectations around data sharing, and efforts to reduce burden at the point of application. At the same time, they highlight how much the details of implementation matter.

Data management plans should not be static compliance documents. Their value lies in supporting thoughtful, collaborative planning across the research lifecycle and in connecting that planning to the systems that enable data to be shared, discovered, and reused. When planning becomes more lightweight or more isolated, there is a risk that these connections weaken over time. The impact of that shift may not be immediately visible, but it can emerge later in the form of data that is harder to interpret, less consistently structured, and more difficult to integrate into broader workflows.

Because NSF and NIH play such a key role in the US and global research communities, their approaches are also likely to influence others. This creates both risk and opportunity. If new models emphasize simplicity without connectivity, fragmentation may increase. If they successfully balance structure, interoperability, and meaningful planning, they can help establish a stronger foundation for the next phase of research data infrastructure.

The path forward does not require choosing between reducing burden and supporting richer, more connected planning. The elements needed to do both are already visible: structured, machine-readable inputs; flexibility in how plans are created and shared; interoperability across systems; and a distinction between early-stage commitments and more detailed, post-award planning.

Bringing these elements together would allow DMPs to function as intended: not just as part of the application process, but as living components of the research lifecycle that support data sharing in practice. As these changes continue to evolve, there is an opportunity for funders, institutions, and the broader community to work together to ensure that DMPs remain both usable and meaningful.

Copyright © 2026 Becky Grady, Maria Praetzellis. Distributed under the terms of the Creative Commons Attribution 4.0 License.
